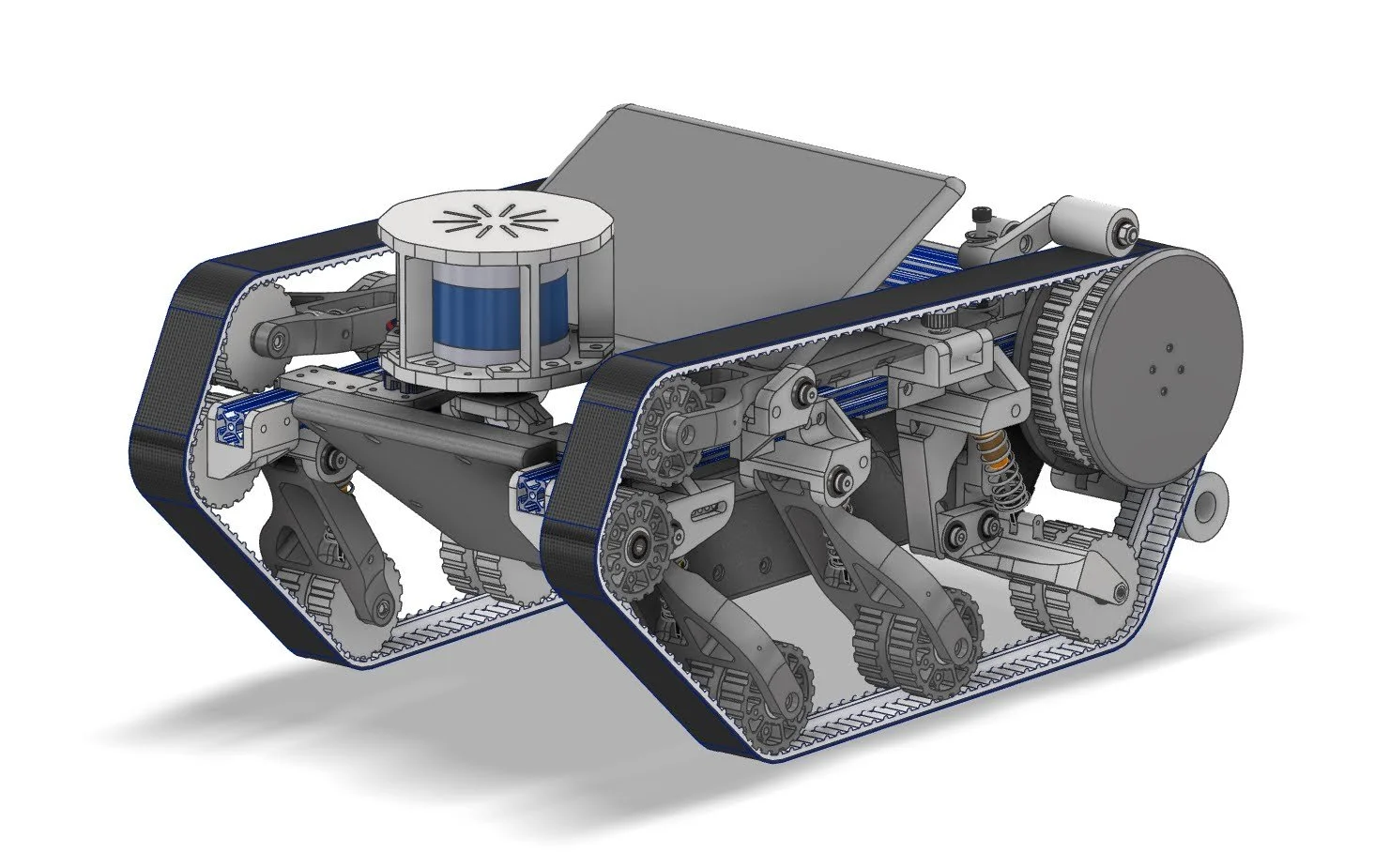

Woodhouse

Academic research in computer vision often involves testing the performance of new algorithms on standardized online datasets. While this makes it easy to benchmark new research, it means missing out on deploying code in the wild and all the excitement that comes with it. Therefore, I set out to build my own robotic platform that I could use to capture data and navigate around my neighborhood.

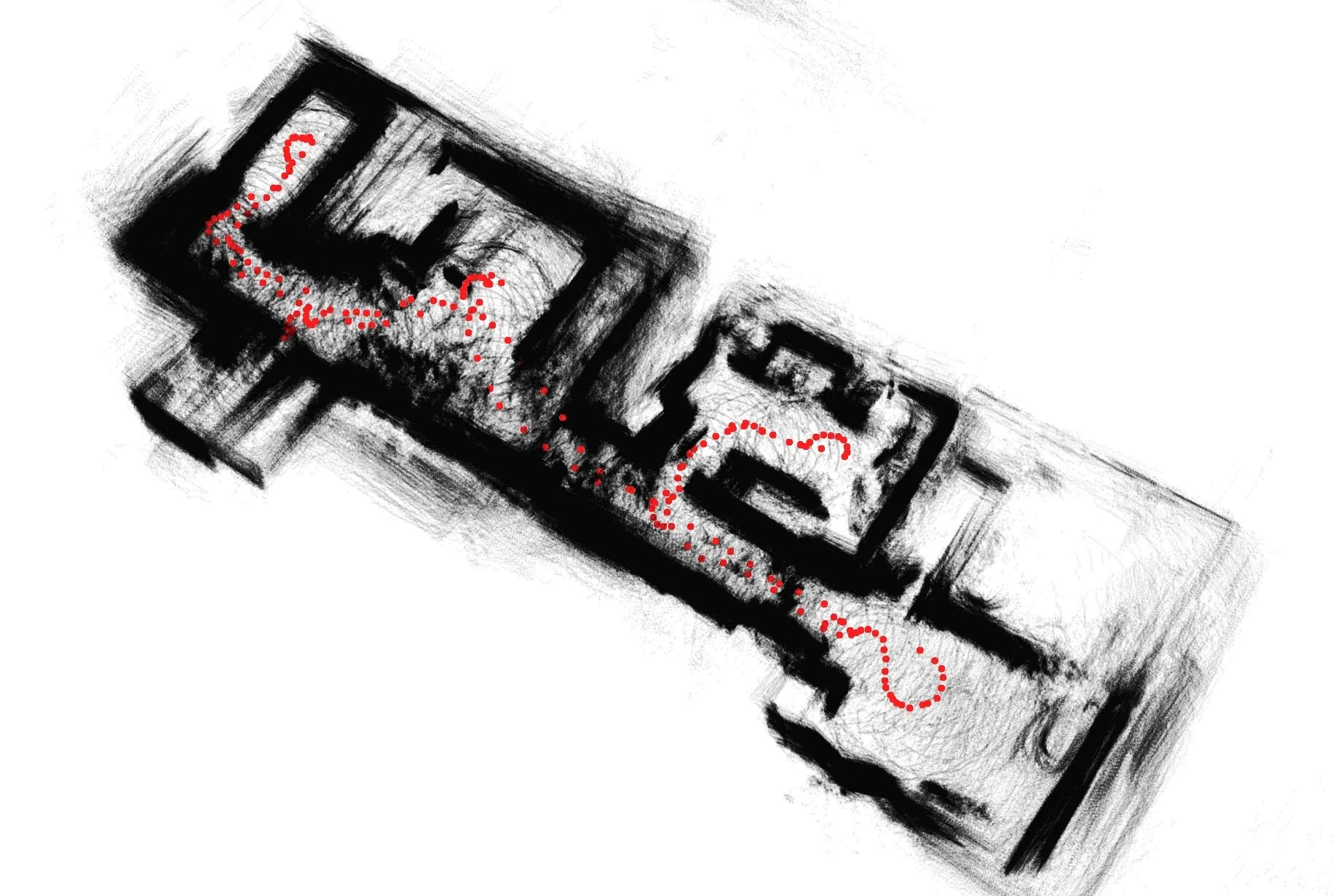

Woodhouse driving down some stairs outside the Cambridge Public Library

Live feed of LiDAR data from the front-mounted sensor

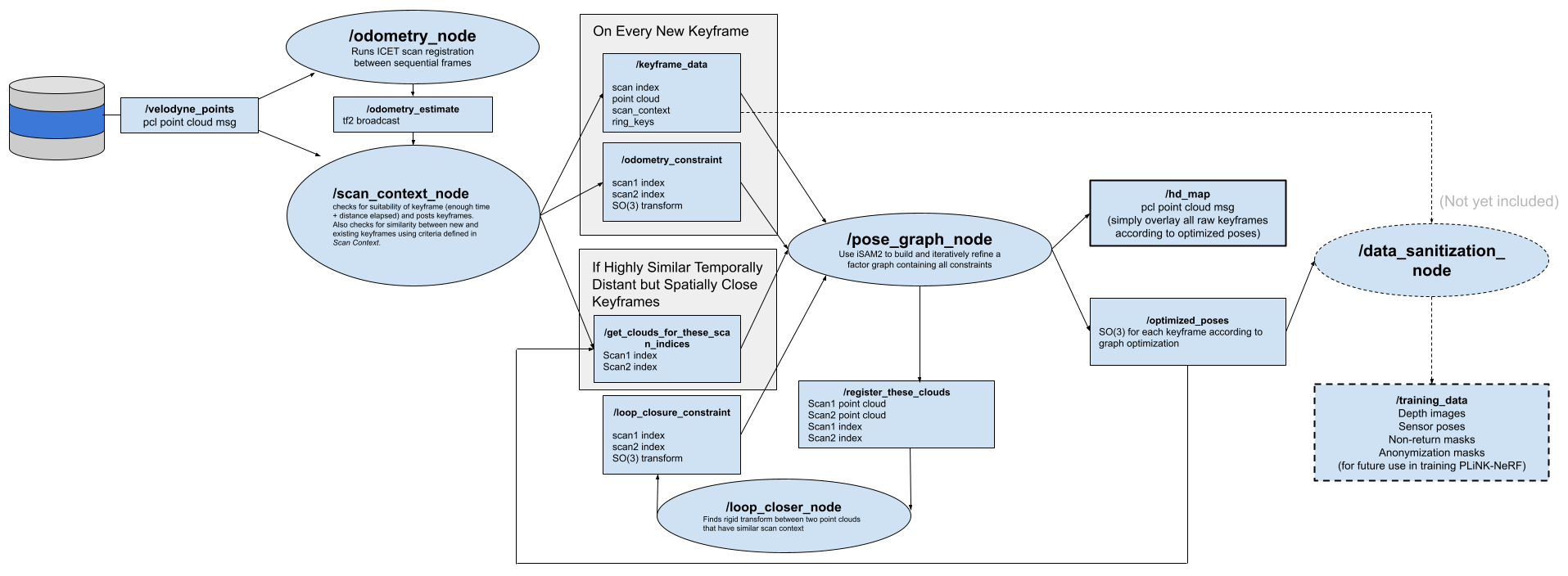

LiDAR SLAM System

As a challenge, I wanted to implement my own end-to-end Simultaneous Localization and Mapping (SLAM) system for Woodhouse from scratch. Below on the left, you can see a stream of raw LiDAR data as published by my Velodyne Puck while I drive Woodhouse around my apartment. On the right, you can see a live estimate of Woodhouse’s trajectory, which is re-optimized in real time as regions of the space are revisited and loop closure constraints are enforced.

Raw LiDAR Data

Live estimate of platform trajectory optimized by SLAM system

It’s not quite on par with state-of-the-art LiDAR-inertial odometry systems, but, surprisingly, the system converged fairly reliably in my cluttered apartment.
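To make the loop-closure idea a bit more concrete, here is a deliberately simplified pose-graph sketch in Python with NumPy. It assumes translation-only 2D poses (no rotation), which turns the optimization into a linear least-squares problem; a real LiDAR SLAM back end optimizes full 6-DoF poses with a nonlinear solver. The function name `optimize_pose_graph` and the edge format are hypothetical illustrations, not taken from Woodhouse's code.

```python
import numpy as np


def optimize_pose_graph(num_poses, edges, anchor_weight=1e6):
    """Hypothetical translation-only pose graph solver (illustrative sketch).

    num_poses: number of 2D positions x_0 .. x_{n-1} to estimate.
    edges: list of (i, j, dxy, weight) meaning "x_j - x_i is approximately dxy".
           Odometry edges link consecutive poses; loop closure edges link a
           revisited pose back to an earlier one.
    Pose 0 is pinned near the origin with a large weight to remove the
    global translation ambiguity (gauge freedom).
    """
    rows, rhs = [], []
    for i, j, dxy, w in edges:
        # x and y decouple in this simplified model: one row per axis.
        for axis in range(2):
            row = np.zeros(2 * num_poses)
            row[2 * j + axis] = w
            row[2 * i + axis] = -w
            rows.append(row)
            rhs.append(w * dxy[axis])
    # Anchor constraint: x_0 = (0, 0).
    for axis in range(2):
        row = np.zeros(2 * num_poses)
        row[axis] = anchor_weight
        rows.append(row)
        rhs.append(0.0)
    A = np.array(rows)
    b = np.array(rhs)
    # Weighted linear least squares over all stacked constraints.
    x, *_ = np.linalg.lstsq(A, b, rcond=None)
    return x.reshape(num_poses, 2)
```

With only drifting odometry edges, the last pose simply accumulates the error. Adding a single, more heavily weighted loop closure edge that says "pose 4 is back at pose 0" spreads that error across the whole chain and pulls the loop shut, which is the behavior visible in the live trajectory above.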

At its core, the system reuses my ICET odometry algorithm and its associated ROS package as the front end.
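In a typical SLAM pipeline, the odometry front end estimates the relative motion between consecutive scans, and those increments are chained together to form the initial trajectory that the back end later corrects. The sketch below shows only that chaining step, under a planar SE(2) simplification and in Python with NumPy; it does not implement ICET itself, and `se2_matrix` and `integrate_odometry` are hypothetical names chosen for illustration.

```python
import numpy as np


def se2_matrix(dx, dy, dtheta):
    """Homogeneous 3x3 transform for a planar motion (dx, dy, dtheta)."""
    c, s = np.cos(dtheta), np.sin(dtheta)
    return np.array([[c, -s, dx],
                     [s, c, dy],
                     [0.0, 0.0, 1.0]])


def integrate_odometry(increments):
    """Chain relative motion estimates into a global trajectory.

    Hypothetical sketch: each increment (dx, dy, dtheta) is assumed to be
    expressed in the robot's previous body frame, as a scan-to-scan
    odometry front end would report it. Returns a list of 3x3 world poses,
    starting at the identity.
    """
    pose = np.eye(3)
    trajectory = [pose]
    for dx, dy, dtheta in increments:
        # Right-multiplying applies the increment in the current body frame.
        pose = pose @ se2_matrix(dx, dy, dtheta)
        trajectory.append(pose)
    return trajectory
```

For example, four increments of one meter forward followed by a 90° left turn trace out a unit square and return to the starting pose. Because each step multiplies onto the previous one, any small error in a single increment propagates to every later pose, which is why loop closure constraints are needed to keep long trajectories consistent.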